CaregiVR: VR Simulation Platform for Nurse Training

Self-directed ergonomic training for nursing staff, without an expert coach present. Full-body motion tracking with real-time posture feedback and interactive 3D session review.

Nurses performing manual patient transfers are among the most injury-prone healthcare workers, with lower back pain and musculoskeletal disorders the leading occupational hazards. The established approach, Kinaesthetics-based transfer training, requires an expert coach to be present at every session. In practice, nursing students receive a single brief three-day course during a three-year programme, and self-directed practice between sessions is nearly impossible without feedback. Incorrect technique, reinforced through repetition, becomes difficult to unlearn.

CaregiVR brings the coach into the headset. The trainee wears a Valve Index HMD and six HTC Vive body trackers, then steps into a virtual room with a patient lying on a bed. As they perform the transfer, the system calculates four ergonomic risk metrics in real time from the tracker data and alerts them the moment any threshold is breached. After the session, they step back and watch a full 3D reconstruction of their own movement, with an error-annotated timeline and contextual tooltips anchored to the exact body parts involved in each mistake.

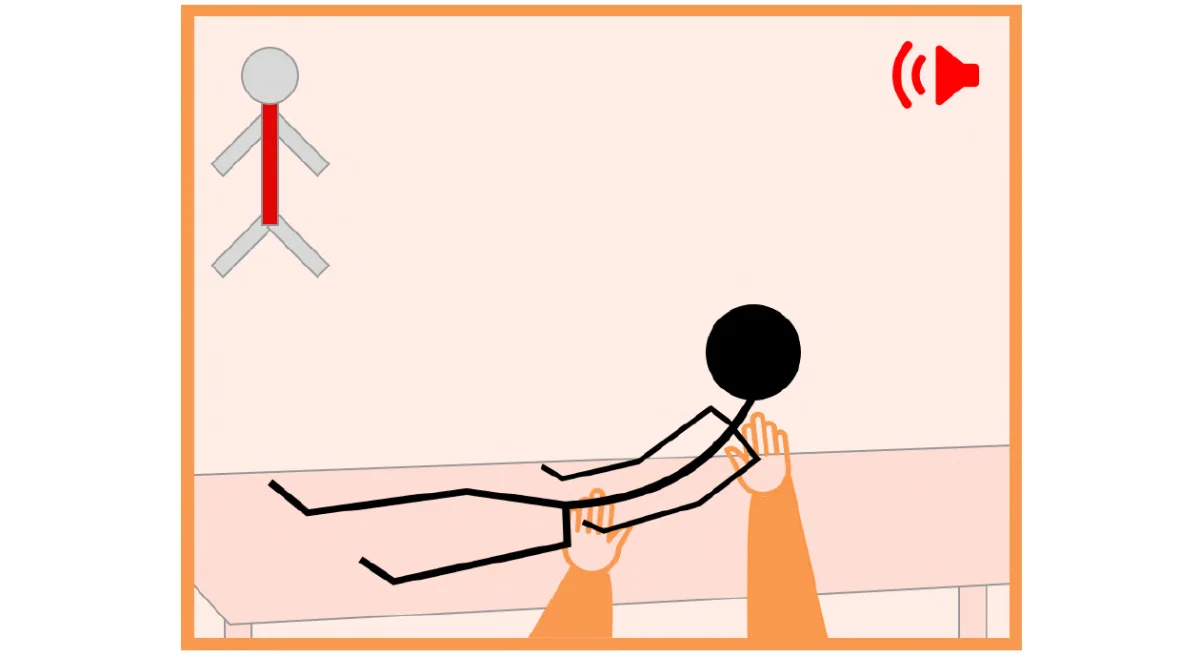

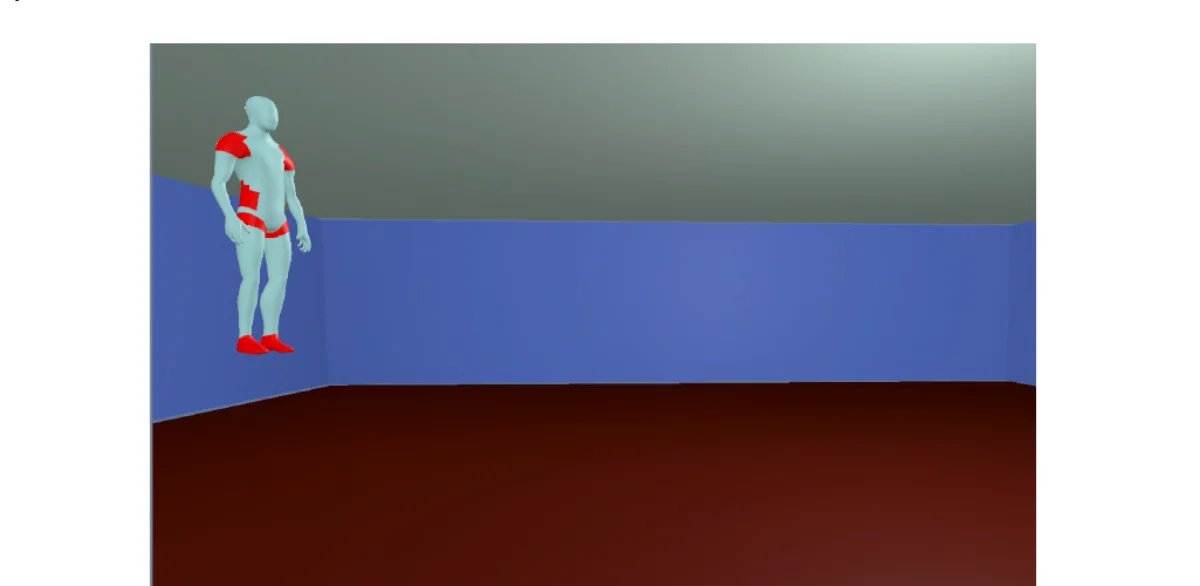

Real-time posture monitoring

Four biomechanical risk metrics are tracked live at 200 Hz. When any metric exceeds its ergonomic threshold, an audio cue fires and an avatar in the trainee's headset highlights the affected body region in red, letting them self-correct mid-movement.
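The threshold check described above could be sketched roughly as follows. This is a minimal illustration, not the platform's code: the metric names, the `Threshold` type, the region labels, and every numeric limit are hypothetical placeholders, since the actual ergonomic thresholds are not stated here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Allowed range for one metric (limits are hypothetical placeholders)."""
    low: float
    high: float
    body_region: str  # avatar region to highlight in red on a breach


# Illustrative values only; the real limits are not given in this document.
THRESHOLDS = {
    "support_base_m": Threshold(0.30, float("inf"), "feet"),
    "pelvis_height_m": Threshold(0.0, 0.80, "legs"),
    "spine_bend_deg": Threshold(0.0, 20.0, "spine"),
    "spine_twist_deg": Threshold(0.0, 15.0, "torso"),
}


def breached_regions(sample: dict[str, float]) -> list[str]:
    """Return the body regions whose metric lies outside its allowed range."""
    regions = []
    for name, limit in THRESHOLDS.items():
        value = sample[name]
        if not (limit.low <= value <= limit.high):
            regions.append(limit.body_region)
    return regions
```

In a live loop, a non-empty result would be the point at which the audio cue plays and the listed regions turn red on the avatar.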

Key Capabilities

- Support base width: distance between the two feet

- Squat positioning: pelvis elevation from the floor

- Spine bend: L5-T8 vertebral angle via Law of Cosines

- Spine twist: shoulder-to-hip vector delta angle

- Audio-visual multimodal alert on threshold breach

- Tag-along avatar overlay stays in the trainee's field of view
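As a rough sketch of the two simpler metrics in the list above, assuming tracker positions arrive as `(x, y, z)` tuples in metres with Y pointing up, and assuming the support base is measured in the floor plane (the function names and the `floor_y` parameter are illustrative, not taken from the platform):

```python
import math

Vec3 = tuple[float, float, float]  # (x, y, z) in metres, Y up (assumed)


def support_base_width(left_foot: Vec3, right_foot: Vec3) -> float:
    """Floor-plane distance between the two foot trackers.

    Height differences are ignored here; that is an assumption of this sketch.
    """
    dx = left_foot[0] - right_foot[0]
    dz = left_foot[2] - right_foot[2]
    return math.hypot(dx, dz)


def pelvis_elevation(pelvis: Vec3, floor_y: float = 0.0) -> float:
    """Height of the pelvis tracker above the floor."""
    return pelvis[1] - floor_y
```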

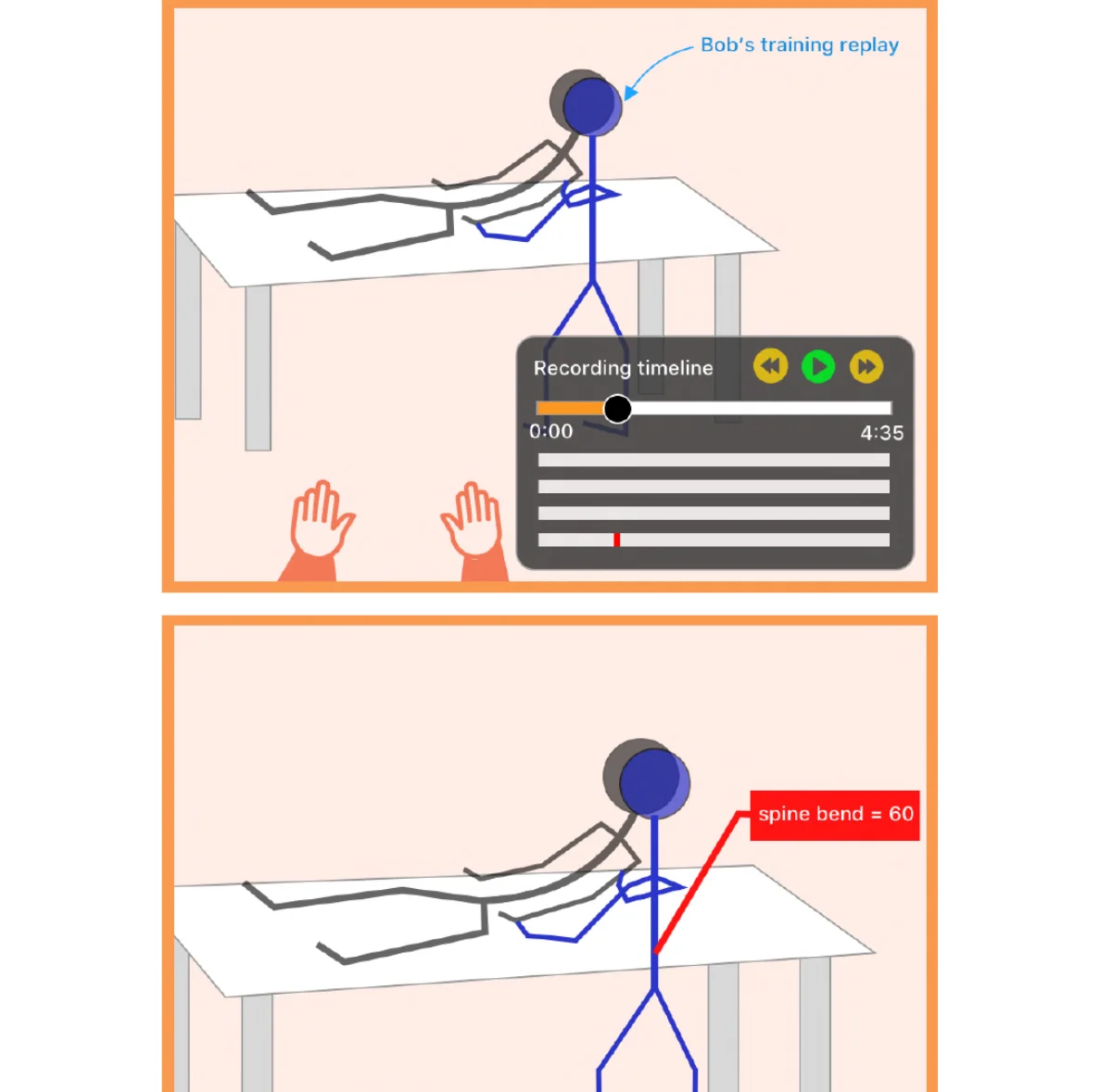

Interactive 3D movement review

After completing the transfer, the trainee steps back and sees a full reconstruction of their movement playing in the same VR space. An animation controller with error markers on the timeline lets them jump directly to each posture mistake. Context-sensitive tooltips anchored to body parts display exact metric values.

Key Capabilities

- Key-frame animation reconstruction from recorded tracker data

- Error-marker overlay on the playback timeline

- Spatial tooltips anchored to avatar joints (e.g., "spine bend = 60°")

- Free-roam review from any perspective in the VR space

- IK-driven humanoid avatar for accurate body shape reproduction

- Play, pause, and jump-to-error controls via animation controller
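One plausible way to derive the timeline's error markers from a recorded metric is to collapse consecutive over-limit frames into time intervals. The sketch below assumes one metric value per frame at the 200 Hz capture rate and a simple upper limit; the function name and signature are hypothetical.

```python
def error_markers(values, limit, rate_hz=200.0):
    """Collapse runs of over-limit frames into (start_s, end_s) intervals."""
    markers = []
    start = None  # index of the first frame in the current over-limit run
    for i, value in enumerate(values):
        if value > limit and start is None:
            start = i
        elif value <= limit and start is not None:
            markers.append((start / rate_hz, (i - 1) / rate_hz))
            start = None
    if start is not None:  # the recording ended while still over the limit
        markers.append((start / rate_hz, (len(values) - 1) / rate_hz))
    return markers
```

The start time of each interval is where a jump-to-error control would seek.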

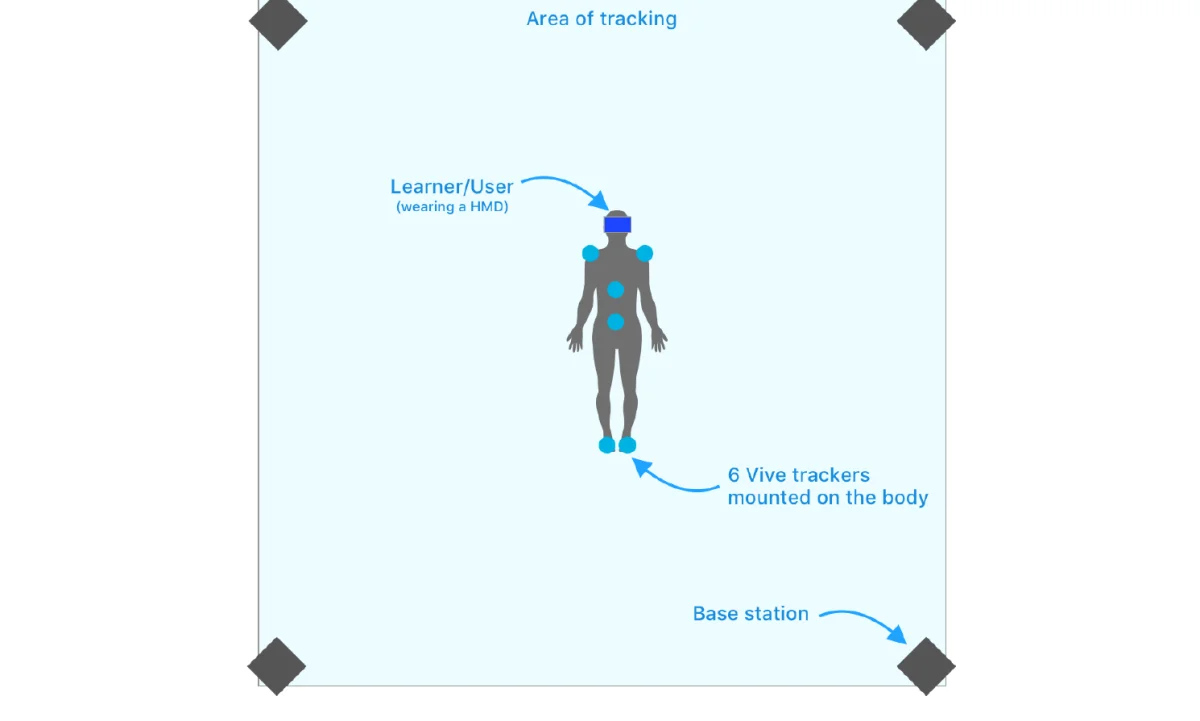

Sparse tracker fusion

Six HTC Vive trackers mounted on the feet, pelvis, T8 vertebra, and shoulders supply six of the eight virtual data points needed for the four risk metrics. The remaining two, the hip positions, are extrapolated from the pelvis tracker at calibration, reducing hardware requirements without sacrificing metric accuracy.
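The hip extrapolation might look something like the following. This is a sketch under stated assumptions: the pelvis tracker reports a position and a unit quaternion `(x, y, z, w)`, the hips sit at a fixed lateral offset along the tracker's local X axis, and that offset (`half_hip_width`, a hypothetical parameter) is measured once at calibration.

```python
Vec3 = tuple[float, float, float]
Quat = tuple[float, float, float, float]  # (x, y, z, w), unit length


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Standard 3D cross product."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rotate(q: Quat, v: Vec3) -> Vec3:
    """Rotate v by unit quaternion q: v' = v + w*t + qv x t, with t = 2*(qv x v)."""
    qv, w = q[:3], q[3]
    t = tuple(2.0 * c for c in cross(qv, v))
    u = cross(qv, t)
    return tuple(v[i] + w * t[i] + u[i] for i in range(3))


def hip_positions(pelvis_pos: Vec3, pelvis_rot: Quat,
                  half_hip_width: float) -> tuple[Vec3, Vec3]:
    """Return (left_hip, right_hip) offset sideways from the pelvis tracker."""
    offset = rotate(pelvis_rot, (half_hip_width, 0.0, 0.0))
    left = tuple(pelvis_pos[i] - offset[i] for i in range(3))
    right = tuple(pelvis_pos[i] + offset[i] for i in range(3))
    return left, right
```

Because the offset is expressed in the tracker's local frame, the virtual hips follow the pelvis as it turns and tilts during the transfer.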

Key Capabilities

- 6-tracker body rig using TrackBelt and TrackStrap mounts

- ±1 cm positional precision at 200 Hz capture rate

- Four-base-station SteamVR setup for full occlusion resistance

- One-time calibration retained across sessions

- Native SteamVR coordinate space, no translation layer required

- VRTK-compatible standalone module architecture

- Sparse tracker placement: only six trackers cover eight required data points. Hip positions are derived by extrapolating from the pelvis tracker's position and rotation at calibration time.

- The spine bend angle is not directly observable from tracker positions. It requires constructing a triangle from the L5 and T8 tracker positions plus an extrapolated vertical reference point, then applying the Law of Cosines.

- Spine twist calculation requires translating shoulder and hip axis vectors to a shared Y-plane before computing the angular difference, compensating for height offset between the two pairs.

- Movement recording at 200 Hz stores nine transform parameters per tracked GameObject per frame. Accurate playback requires re-driving an IK skeleton from this sparse data without introducing temporal jitter.

- Context-sensitive tooltips in the terminal feedback phase must stay anchored to moving avatar body parts while the user freely walks around the reconstruction, requiring per-frame billboard recalculation.
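The two spine metrics described in the list above can be sketched as follows. The details are assumptions made for illustration: positions are `(x, y, z)` tuples with Y up, the vertical reference point is placed directly above L5 at a distance equal to the L5-T8 segment length, and "shared Y-plane" is interpreted as dropping the Y component of both axis vectors. The function names are hypothetical.

```python
import math


def spine_bend_deg(l5, t8):
    """Angle at L5 between the L5->T8 segment and vertical, via the law of cosines."""
    a = math.dist(l5, t8)              # side L5-T8
    if a == 0.0:
        return 0.0                     # degenerate input: trackers coincide
    ref = (l5[0], l5[1] + a, l5[2])    # extrapolated point straight above L5
    b = a                              # side L5-ref, equal to a by construction
    c = math.dist(t8, ref)             # side T8-ref
    cos_theta = (a * a + b * b - c * c) / (2.0 * a * b)
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding error
    return math.degrees(math.acos(cos_theta))


def spine_twist_deg(l_shoulder, r_shoulder, l_hip, r_hip):
    """Unsigned angle between shoulder and hip axes, both projected onto the floor plane."""
    sx, sz = r_shoulder[0] - l_shoulder[0], r_shoulder[2] - l_shoulder[2]
    hx, hz = r_hip[0] - l_hip[0], r_hip[2] - l_hip[2]
    dot = sx * hx + sz * hz
    det = sx * hz - sz * hx
    return abs(math.degrees(math.atan2(det, dot)))
```

Dropping the Y component removes the height offset between the shoulder pair and the hip pair, so only the rotation about the vertical axis contributes to the twist angle.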

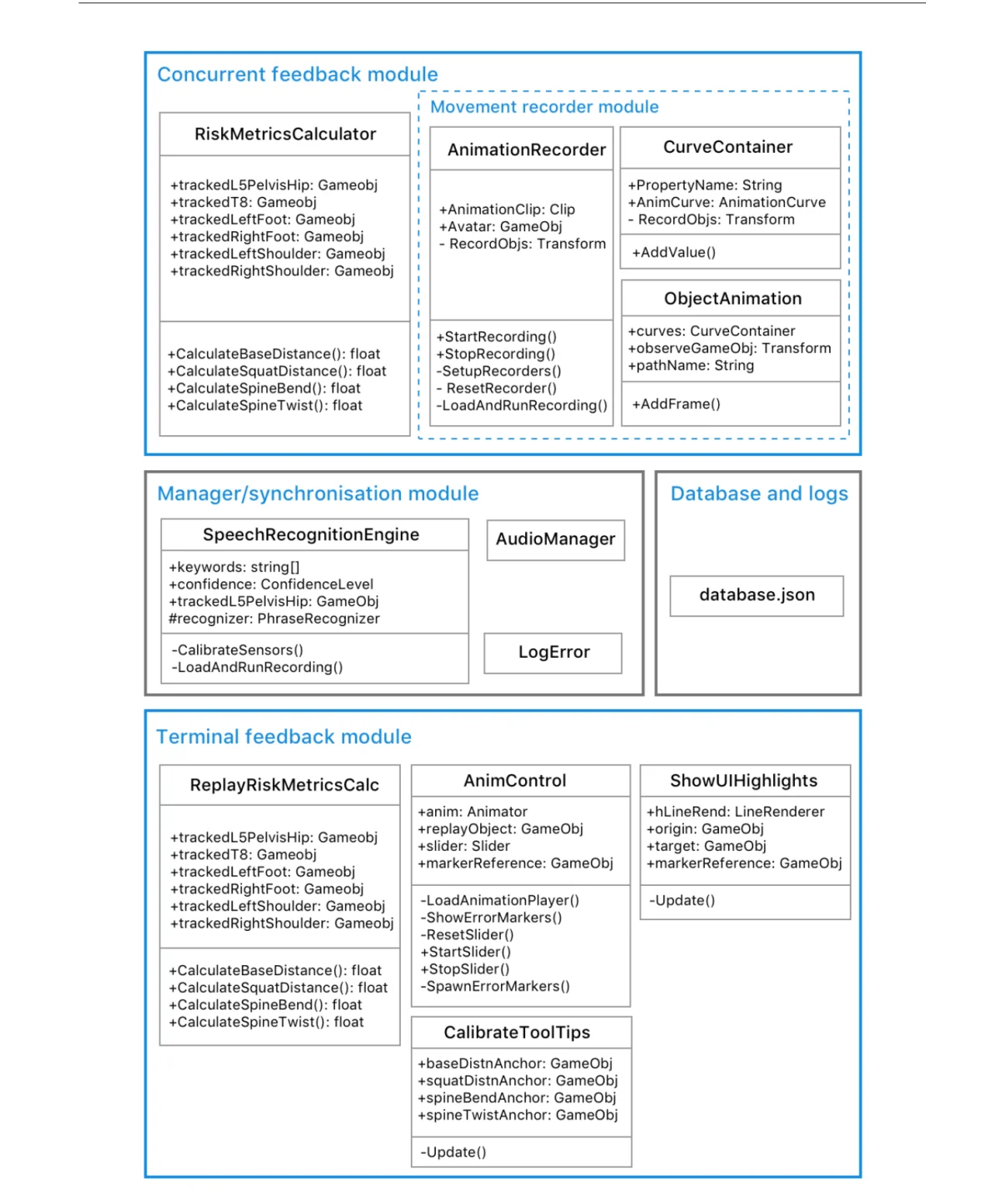

- The concurrent and terminal feedback modules share movement data through a manager module that synchronises recording start, stop, and playback trigger events across independently running components.
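A manager of this kind is often built as a small publish/subscribe hub. The sketch below is one hypothetical shape for it, not the platform's actual module: the class name, event names, and frame format are all invented for illustration.

```python
from collections import defaultdict


class SessionManager:
    """Hypothetical hub that relays session events and shares recorded frames."""

    EVENTS = ("record_start", "record_stop", "playback")

    def __init__(self):
        self._subscribers = defaultdict(list)  # event name -> callbacks
        self.frames = []                       # movement data shared by all modules

    def subscribe(self, event, callback):
        """Register callback(frames) to run whenever `event` is emitted."""
        if event not in self.EVENTS:
            raise ValueError(f"unknown event: {event}")
        self._subscribers[event].append(callback)

    def _emit(self, event):
        for callback in self._subscribers[event]:
            callback(self.frames)

    def start_recording(self):
        self.frames.clear()
        self._emit("record_start")

    def add_frame(self, frame):
        self.frames.append(frame)

    def stop_recording(self):
        self._emit("record_stop")

    def play(self):
        self._emit("playback")
```

With this layout, the concurrent feedback module would subscribe to the recording events and the terminal feedback module to `playback`, and neither would need a direct reference to the other.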